mirror of

https://github.com/qodo-ai/pr-agent.git

synced 2025-12-12 02:45:18 +00:00

Compare commits

425 commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

bf5da9a9fb | ||

|

|

ede3f82143 | ||

|

|

5ec92b3535 | ||

|

|

4a67e23eab | ||

|

|

3ce4780e38 | ||

|

|

edd9ef9d4f | ||

|

|

e661147a1d | ||

|

|

d8dcc3ca34 | ||

|

|

f7a4f3fc8b | ||

|

|

0bbad14c70 | ||

|

|

4c5d3d6a6e | ||

|

|

a98eced418 | ||

|

|

6d22618756 | ||

|

|

8dbc53271e | ||

|

|

79e0ff03fa | ||

|

|

d36ad319f7 | ||

|

|

2f4b71da83 | ||

|

|

7b365b8d1c | ||

|

|

7de5ac74ca | ||

|

|

bfc82390dd | ||

|

|

a2b5ae87d7 | ||

|

|

d141e4ff9d | ||

|

|

8bd134e30c | ||

|

|

a84ba36cf4 | ||

|

|

3d54b501b2 | ||

|

|

a2fd3cc190 | ||

|

|

f2bbf708f2 | ||

|

|

5f8ac3d8cf | ||

|

|

9ee7ba3919 | ||

|

|

c728a486b4 | ||

|

|

8d3f3bca45 | ||

|

|

8a0759f835 | ||

|

|

f88b7ffb4d | ||

|

|

51084b4617 | ||

|

|

40ff5db659 | ||

|

|

b7874e6e5f | ||

|

|

1d7c82d68c | ||

|

|

fc7d614552 | ||

|

|

ec784874f9 | ||

|

|

eebdeea9f9 | ||

|

|

cb1c82073b | ||

|

|

335dadd0ac | ||

|

|

33744d9544 | ||

|

|

bf1cc50ece | ||

|

|

d67d053ae5 | ||

|

|

e43bf05c6e | ||

|

|

f2ba770558 | ||

|

|

3dd373a77e | ||

|

|

b9c6a2c747 | ||

|

|

a6e11f60ce | ||

|

|

604c17348d | ||

|

|

8c7712bf30 | ||

|

|

7969e4ba30 | ||

|

|

dcab8a893c | ||

|

|

2230da73fd | ||

|

|

f251a8a6cb | ||

|

|

76ed9156d3 | ||

|

|

9fd28e5680 | ||

|

|

d0bbc56480 | ||

|

|

c3bc789cd3 | ||

|

|

9252cb88d8 | ||

|

|

1527ea07cf | ||

|

|

5592e9d49d | ||

|

|

40633d7a9e | ||

|

|

d862d82475 | ||

|

|

5623fd14da | ||

|

|

26c7574b3b | ||

|

|

01a7c04263 | ||

|

|

a194ca65d2 | ||

|

|

584e87ae48 | ||

|

|

632c39962c | ||

|

|

be93651db8 | ||

|

|

fea8bc5150 | ||

|

|

58fe872a44 | ||

|

|

2866384931 | ||

|

|

3d66a9e8c3 | ||

|

|

65dea899ec | ||

|

|

7db9529d96 | ||

|

|

b5260a824f | ||

|

|

ccfc24ea85 | ||

|

|

063d0999c5 | ||

|

|

91dade811f | ||

|

|

3388ca34df | ||

|

|

10f960f43d | ||

|

|

7573dbbc1b | ||

|

|

ae9bb72f8e | ||

|

|

76d05e2279 | ||

|

|

544c962dd7 | ||

|

|

557a52b7f3 | ||

|

|

ed2c615d65 | ||

|

|

d35f051a58 | ||

|

|

7fc0a2330d | ||

|

|

5b5e4dd59f | ||

|

|

40ea7fcb6c | ||

|

|

96299b28bc | ||

|

|

0ee6ee6e7d | ||

|

|

60948ac3c8 | ||

|

|

0588459ca3 | ||

|

|

42f7c72857 | ||

|

|

393749dcb7 | ||

|

|

7981a2de2e | ||

|

|

6274852a88 | ||

|

|

fc1b757e92 | ||

|

|

3fd8210279 | ||

|

|

6c0087e0d9 | ||

|

|

7d7292b2d0 | ||

|

|

ae4c8f85f9 | ||

|

|

6dabc7b1ae | ||

|

|

9ad8e921b5 | ||

|

|

12eea7acf3 | ||

|

|

1fff1b0208 | ||

|

|

0989247d42 | ||

|

|

411f933a34 | ||

|

|

2a5a84367c | ||

|

|

5de82e379a | ||

|

|

532fbbe0a6 | ||

|

|

0f8606b899 | ||

|

|

c84a602d3a | ||

|

|

025b9bf44c | ||

|

|

785dde5e54 | ||

|

|

08a683c9a4 | ||

|

|

a53a9358ed | ||

|

|

03832818e6 | ||

|

|

ead49dc605 | ||

|

|

ae4fc71603 | ||

|

|

8258c2e774 | ||

|

|

dae9683770 | ||

|

|

5fc466bfbc | ||

|

|

d2c304eede | ||

|

|

a3217042f4 | ||

|

|

07cc7f9f42 | ||

|

|

604e5743f3 | ||

|

|

7597d27095 | ||

|

|

fb452b992d | ||

|

|

0b801d391c | ||

|

|

8f9ad2b38e | ||

|

|

10a7a0fbef | ||

|

|

e4d3f8fe7f | ||

|

|

08da41c929 | ||

|

|

0a3d655912 | ||

|

|

81525cd25a | ||

|

|

aafa835137 | ||

|

|

57ec112b4d | ||

|

|

f645ebb938 | ||

|

|

ae799d1327 | ||

|

|

9c3972d619 | ||

|

|

e0a4be1397 | ||

|

|

d01a7daaa7 | ||

|

|

5c3da5d83e | ||

|

|

9e2f1ed603 | ||

|

|

6d38a90ba1 | ||

|

|

6499b8e592 | ||

|

|

9bbed66028 | ||

|

|

74a7163eea | ||

|

|

e2cfc897ab | ||

|

|

5e28f8a1d1 | ||

|

|

2de4a9996f | ||

|

|

01f16f57ca | ||

|

|

bcfd2b3d6d | ||

|

|

34b562db22 | ||

|

|

4a6a55ca7c | ||

|

|

af803ce473 | ||

|

|

9ab0a611e2 | ||

|

|

a60b91e85d | ||

|

|

4958decc89 | ||

|

|

bb115432f2 | ||

|

|

f3287a9f67 | ||

|

|

9a2ba2d881 | ||

|

|

0090f7be81 | ||

|

|

c927d20b5e | ||

|

|

8e36f46dae | ||

|

|

de5c1adaa0 | ||

|

|

1c25420fb3 | ||

|

|

62a029d36a | ||

|

|

5162d847b3 | ||

|

|

6be8860959 | ||

|

|

406ef6a934 | ||

|

|

3980c0d9d5 | ||

|

|

385f8908ad | ||

|

|

e14beacc19 | ||

|

|

fd7f8f2596 | ||

|

|

df9cb3f635 | ||

|

|

4fb22beb3a | ||

|

|

03867d5962 | ||

|

|

96435a34f8 | ||

|

|

807ce6ec65 | ||

|

|

395e5a2e04 | ||

|

|

65457b2569 | ||

|

|

82feddbb95 | ||

|

|

fb73eb75f9 | ||

|

|

39add8d78a | ||

|

|

d406555f23 | ||

|

|

0d3c2e6b51 | ||

|

|

8e33b18e2c | ||

|

|

0b00812269 | ||

|

|

06bb64a0a2 | ||

|

|

dcfc7cc54f | ||

|

|

9383cdd520 | ||

|

|

dd6f56915b | ||

|

|

54ffb2d0a1 | ||

|

|

79253e8f60 | ||

|

|

8cef104784 | ||

|

|

6aa26d8c56 | ||

|

|

10cd8848d9 | ||

|

|

fdd91c6663 | ||

|

|

5d50cfcb34 | ||

|

|

a23b527101 | ||

|

|

b81b671ab1 | ||

|

|

2d858a43be | ||

|

|

d497c33c74 | ||

|

|

642c413f08 | ||

|

|

fb3ba64576 | ||

|

|

eb10d8c6d3 | ||

|

|

aa9bfb07af | ||

|

|

0dd4f682d9 | ||

|

|

9e4923aa79 | ||

|

|

497396eaeb | ||

|

|

3a87d3ef03 | ||

|

|

d0c0aaf1c7 | ||

|

|

0b962a16e4 | ||

|

|

1e285aca1f | ||

|

|

91cc4daada | ||

|

|

fc3045df95 | ||

|

|

12a01ef5f5 | ||

|

|

aae7726bb2 | ||

|

|

70a3059cbf | ||

|

|

c12cd2d02d | ||

|

|

c3b153cdc1 | ||

|

|

460b1a54b9 | ||

|

|

a8b8202567 | ||

|

|

af2b66bb51 | ||

|

|

7b4c50c717 | ||

|

|

86847c40ff | ||

|

|

6e543da4b4 | ||

|

|

ad2a96da50 | ||

|

|

359462abfe | ||

|

|

d4ab8c46e8 | ||

|

|

5e53466d97 | ||

|

|

3efe091bc8 | ||

|

|

6f7d81b086 | ||

|

|

755165e90c | ||

|

|

179fef796d | ||

|

|

0f7f135083 | ||

|

|

4bb7da1376 | ||

|

|

e2377a46f3 | ||

|

|

4448e03117 | ||

|

|

436b5c7a0a | ||

|

|

c7511ac0d5 | ||

|

|

a397ac1e63 | ||

|

|

4384740cb6 | ||

|

|

ae6576c06b | ||

|

|

940f82b695 | ||

|

|

441b4b3795 | ||

|

|

bdee6f9f36 | ||

|

|

1663eaad4a | ||

|

|

0ee115e19c | ||

|

|

aaba9b6b3c | ||

|

|

93aaa59b2d | ||

|

|

f1c068bc44 | ||

|

|

fffbee5b34 | ||

|

|

5e555d09c7 | ||

|

|

7f95e39361 | ||

|

|

730fa66594 | ||

|

|

f42dc28a55 | ||

|

|

7251e6df96 | ||

|

|

b01a2b5f4a | ||

|

|

f170af4675 | ||

|

|

96d408c42a | ||

|

|

65e71cb2ee | ||

|

|

9773afe155 | ||

|

|

0a8a263809 | ||

|

|

597f553dd5 | ||

|

|

4b6fcfe60e | ||

|

|

7cc4206b70 | ||

|

|

8906a81a2e | ||

|

|

6179eeca58 | ||

|

|

e8c73e7baa | ||

|

|

754d47f187 | ||

|

|

bec70dc96a | ||

|

|

fd32c83c29 | ||

|

|

7efeeb1de8 | ||

|

|

d7d4b7de89 | ||

|

|

2a37225574 | ||

|

|

e87fdd0ab5 | ||

|

|

c0d7fd8c36 | ||

|

|

380437b44f | ||

|

|

5933280417 | ||

|

|

8e0c5c8784 | ||

|

|

0e9cf274ef | ||

|

|

3aae48f09c | ||

|

|

c4dd07b3b8 | ||

|

|

8c7680d85d | ||

|

|

11fb6ccc7e | ||

|

|

3aaa727e05 | ||

|

|

6108f96bff | ||

|

|

5a00897cbe | ||

|

|

e12b27879c | ||

|

|

fac2141df3 | ||

|

|

1dbfd27d8e | ||

|

|

eaeee97535 | ||

|

|

71bbc52a99 | ||

|

|

4a8e9b79e8 | ||

|

|

efdb0f5744 | ||

|

|

28750c70e0 | ||

|

|

583ed10dca | ||

|

|

07d71f2d25 | ||

|

|

447a384aee | ||

|

|

d9eb0367cf | ||

|

|

85484899c3 | ||

|

|

00b5815785 | ||

|

|

9becad2eaf | ||

|

|

74df3f8bd5 | ||

|

|

4ab97d8969 | ||

|

|

6057812a20 | ||

|

|

598e2c731b | ||

|

|

0742d8052f | ||

|

|

1713cded21 | ||

|

|

e7268dd314 | ||

|

|

50c2578cfd | ||

|

|

5a56d11e16 | ||

|

|

31e25a5965 | ||

|

|

85e1e2d4ee | ||

|

|

2d8bee0d6d | ||

|

|

e0d7083768 | ||

|

|

dbf96ff749 | ||

|

|

5f9eee2d12 | ||

|

|

d4c5ab7bf0 | ||

|

|

5ae6d71c37 | ||

|

|

d30d077939 | ||

|

|

aa18d532cf | ||

|

|

92d36f6791 | ||

|

|

5e82d0a316 | ||

|

|

b7b198947c | ||

|

|

fb69313d87 | ||

|

|

017db5b63c | ||

|

|

3f632835c5 | ||

|

|

e2d71acb9d | ||

|

|

8127d52ab3 | ||

|

|

6a55bbcd23 | ||

|

|

12af211c13 | ||

|

|

34594e5436 | ||

|

|

17a90c536f | ||

|

|

ef2e69dbf3 | ||

|

|

38dc9a8fe5 | ||

|

|

c3f8ef939c | ||

|

|

34cc434459 | ||

|

|

a3d52f9cc7 | ||

|

|

f56728fbca | ||

|

|

19ddf1b2e4 | ||

|

|

23ce79589c | ||

|

|

8cd82b5dbf | ||

|

|

dba6846a04 | ||

|

|

317eb65cc2 | ||

|

|

9817602ab5 | ||

|

|

8a7b37ab4c | ||

|

|

3b071ccb4e | ||

|

|

822a253eb5 | ||

|

|

aeb1bd8dbc | ||

|

|

df8290a290 | ||

|

|

9e20373cb0 | ||

|

|

6dc38e5bca | ||

|

|

f7efa2c7c7 | ||

|

|

d77d2f86da | ||

|

|

2276caba39 | ||

|

|

12d3d6cc0b | ||

|

|

630712e24c | ||

|

|

e1a112d26e | ||

|

|

1b46d64d71 | ||

|

|

38eda2f7b6 | ||

|

|

53b9c8ec97 | ||

|

|

7e8e95b748 | ||

|

|

7f51661e64 | ||

|

|

70023d2c4f | ||

|

|

c5d34f5ad5 | ||

|

|

8d3e51c205 | ||

|

|

b213753420 | ||

|

|

2eb8019325 | ||

|

|

9115cb7d31 | ||

|

|

62adad8f12 | ||

|

|

56f7ae0b46 | ||

|

|

446c1fb49a | ||

|

|

7d50625bd6 | ||

|

|

bd9ddc8b86 | ||

|

|

dd4fe4dcb4 | ||

|

|

1c174f263f | ||

|

|

d860e17b3b | ||

|

|

f83970bc6b | ||

|

|

87a245bf9c | ||

|

|

2d1afc634e | ||

|

|

e4f477dae0 | ||

|

|

8e210f8ea0 | ||

|

|

9c87056263 | ||

|

|

3251f19a19 | ||

|

|

299a2c89d1 | ||

|

|

c7241ca093 | ||

|

|

1a00e61239 | ||

|

|

f166e7f497 | ||

|

|

8dc08e4596 | ||

|

|

ead2c9273f | ||

|

|

5062543325 | ||

|

|

35e865bfb6 | ||

|

|

abb576c84f | ||

|

|

2d61ff7b88 | ||

|

|

e75b863f3b | ||

|

|

849cb2ea5a | ||

|

|

ab80677e3a | ||

|

|

bd7017d630 | ||

|

|

6e2bc01294 | ||

|

|

22c16f586b | ||

|

|

a42e3331d8 | ||

|

|

e14834c84e | ||

|

|

915a1c563b | ||

|

|

bc99cf83dd | ||

|

|

d00cbd4da7 | ||

|

|

721ff18a63 | ||

|

|

1a003fe4d3 | ||

|

|

68f78e1a30 | ||

|

|

7759d1d3fc | ||

|

|

738f9856a4 | ||

|

|

fbce8cd2f5 | ||

|

|

ea63c8e63a | ||

|

|

d8fea6afc4 | ||

|

|

ff16e1cd26 | ||

|

|

9b5ae1a322 | ||

|

|

8b8464163d |

98 changed files with 4746 additions and 878 deletions

|

|

@ -34,7 +34,7 @@ This Code of Conduct applies both within project spaces and in public spaces whe

|

||||||

individual is representing the project or its community.

|

individual is representing the project or its community.

|

||||||

|

|

||||||

Instances of abusive, harassing, or otherwise unacceptable behavior may be reported by

|

Instances of abusive, harassing, or otherwise unacceptable behavior may be reported by

|

||||||

contacting a project maintainer at tal.r@qodo.ai . All complaints will

|

contacting a project maintainer at dana.f@qodo.ai . All complaints will

|

||||||

be reviewed and investigated and will result in a response that is deemed necessary and

|

be reviewed and investigated and will result in a response that is deemed necessary and

|

||||||

appropriate to the circumstances. Maintainers are obligated to maintain confidentiality

|

appropriate to the circumstances. Maintainers are obligated to maintain confidentiality

|

||||||

with regard to the reporter of an incident.

|

with regard to the reporter of an incident.

|

||||||

|

|

|

||||||

271

README.md

271

README.md

|

|

@ -1,261 +1,36 @@

|

||||||

<div align="center">

|

# 🧠 PR Agent LEGACY STATUS (open source)

|

||||||

|

Originally created and open-sourced by Qodo - the team behind next-generation AI Code Review.

|

||||||

|

|

||||||

<div align="center">

|

## 🚀 About

|

||||||

|

PR Agent was the first AI assistant for pull requests, built by Qodo, and contributed to the open-source community.

|

||||||

|

It represents the first generation of intelligent code review - the project that started Qodo’s journey toward fully AI-driven development, Code Review.

|

||||||

|

If you enjoy this project, you’ll love the next-level PR Agent - Qodo free tier version, which is faster, smarter, and built for today’s workflows.

|

||||||

|

|

||||||

<picture>

|

🚀 Qodo includes a free user trial, 250 tokens, bonus tokens for active contributors, and 50% more advanced features than this open-source version.

|

||||||

<source media="(prefers-color-scheme: dark)" srcset="https://www.qodo.ai/wp-content/uploads/2025/02/PR-Agent-Purple-2.png">

|

|

||||||

<source media="(prefers-color-scheme: light)" srcset="https://www.qodo.ai/wp-content/uploads/2025/02/PR-Agent-Purple-2.png">

|

|

||||||

<img src="https://codium.ai/images/pr_agent/logo-light.png" alt="logo" width="330">

|

|

||||||

|

|

||||||

</picture>

|

If you have an open-source project, you can get the Qodo paid version for free for your project, powered by Google Gemini 2.5 Pro – [https://www.qodo.ai/solutions/open-source/](https://www.qodo.ai/solutions/open-source/)

|

||||||

<br/>

|

|

||||||

|

|

||||||

[Installation Guide](https://qodo-merge-docs.qodo.ai/installation/) |

|

|

||||||

[Usage Guide](https://qodo-merge-docs.qodo.ai/usage-guide/) |

|

|

||||||

[Tools Guide](https://qodo-merge-docs.qodo.ai/tools/) |

|

|

||||||

[Qodo Merge](https://qodo-merge-docs.qodo.ai/overview/pr_agent_pro/) 💎

|

|

||||||

|

|

||||||

PR-Agent aims to help efficiently review and handle pull requests, by providing AI feedback and suggestions

|

|

||||||

</div>

|

|

||||||

|

|

||||||

[](https://chromewebstore.google.com/detail/qodo-merge-ai-powered-cod/ephlnjeghhogofkifjloamocljapahnl)

|

|

||||||

[](https://github.com/apps/qodo-merge-pro/)

|

|

||||||

[](https://github.com/apps/qodo-merge-pro-for-open-source/)

|

|

||||||

[](https://discord.com/invite/SgSxuQ65GF)

|

|

||||||

<a href="https://github.com/Codium-ai/pr-agent/commits/main">

|

|

||||||

<img alt="GitHub" src="https://img.shields.io/github/last-commit/Codium-ai/pr-agent/main?style=for-the-badge" height="20">

|

|

||||||

</a>

|

|

||||||

</div>

|

|

||||||

|

|

||||||

## Table of Contents

|

|

||||||

|

|

||||||

- [Getting Started](#getting-started)

|

|

||||||

- [News and Updates](#news-and-updates)

|

|

||||||

- [Overview](#overview)

|

|

||||||

- [See It in Action](#see-it-in-action)

|

|

||||||

- [Try It Now](#try-it-now)

|

|

||||||

- [Qodo Merge 💎](#qodo-merge-)

|

|

||||||

- [How It Works](#how-it-works)

|

|

||||||

- [Why Use PR-Agent?](#why-use-pr-agent)

|

|

||||||

- [Data Privacy](#data-privacy)

|

|

||||||

- [Contributing](#contributing)

|

|

||||||

- [Links](#links)

|

|

||||||

|

|

||||||

## Getting Started

|

|

||||||

|

|

||||||

### Try it Instantly

|

|

||||||

Test PR-Agent on any public GitHub repository by commenting `@CodiumAI-Agent /improve`

|

|

||||||

|

|

||||||

### GitHub Action

|

|

||||||

Add automated PR reviews to your repository with a simple workflow file using [GitHub Action setup guide](https://qodo-merge-docs.qodo.ai/installation/github/#run-as-a-github-action)

|

|

||||||

|

|

||||||

#### Other Platforms

|

|

||||||

- [GitLab webhook setup](https://qodo-merge-docs.qodo.ai/installation/gitlab/)

|

|

||||||

- [BitBucket app installation](https://qodo-merge-docs.qodo.ai/installation/bitbucket/)

|

|

||||||

- [Azure DevOps setup](https://qodo-merge-docs.qodo.ai/installation/azure/)

|

|

||||||

|

|

||||||

### CLI Usage

|

|

||||||

Run PR-Agent locally on your repository via command line: [Local CLI setup guide](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#local-repo-cli)

|

|

||||||

|

|

||||||

### Discover Qodo Merge 💎

|

|

||||||

Zero-setup hosted solution with advanced features and priority support

|

|

||||||

- [Intro and Installation guide](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/)

|

|

||||||

- [Plans & Pricing](https://www.qodo.ai/pricing/)

|

|

||||||

|

|

||||||

|

|

||||||

## News and Updates

|

|

||||||

|

|

||||||

## Jun 3, 2025

|

|

||||||

|

|

||||||

Qodo Merge now offers a simplified free tier 💎.

|

|

||||||

Organizations can use Qodo Merge at no cost, with a [monthly limit](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/#cloud-users) of 75 PR reviews per organization.

|

|

||||||

|

|

||||||

## May 17, 2025

|

|

||||||

|

|

||||||

- v0.29 was [released](https://github.com/qodo-ai/pr-agent/releases)

|

|

||||||

- `Qodo Merge Pull Request Benchmark` was [released](https://qodo-merge-docs.qodo.ai/pr_benchmark/). This benchmark evaluates and compares the performance of LLMs in analyzing pull request code.

|

|

||||||

- `Recent Updates and Future Roadmap` page was added to the [Qodo Merge Docs](https://qodo-merge-docs.qodo.ai/recent_updates/)

|

|

||||||

|

|

||||||

## Apr 30, 2025

|

|

||||||

|

|

||||||

A new feature is now available in the `/improve` tool for Qodo Merge 💎 - Chat on code suggestions.

|

|

||||||

|

|

||||||

<img width="512" alt="image" src="https://codium.ai/images/pr_agent/improve_chat_on_code_suggestions_ask.png" />

|

|

||||||

|

|

||||||

Read more about it [here](https://qodo-merge-docs.qodo.ai/tools/improve/#chat-on-code-suggestions).

|

|

||||||

|

|

||||||

## Apr 16, 2025

|

|

||||||

|

|

||||||

New tool for Qodo Merge 💎 - `/scan_repo_discussions`.

|

|

||||||

|

|

||||||

<img width="635" alt="image" src="https://codium.ai/images/pr_agent/scan_repo_discussions_2.png" />

|

|

||||||

|

|

||||||

Read more about it [here](https://qodo-merge-docs.qodo.ai/tools/scan_repo_discussions/).

|

|

||||||

|

|

||||||

## Overview

|

|

||||||

|

|

||||||

<div style="text-align:left;">

|

|

||||||

|

|

||||||

Supported commands per platform:

|

|

||||||

|

|

||||||

| | | GitHub | GitLab | Bitbucket | Azure DevOps | Gitea |

|

|

||||||

|---------------------------------------------------------|---------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|:-----:|

|

|

||||||

| [TOOLS](https://qodo-merge-docs.qodo.ai/tools/) | [Describe](https://qodo-merge-docs.qodo.ai/tools/describe/) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

|

||||||

| | [Review](https://qodo-merge-docs.qodo.ai/tools/review/) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

|

||||||

| | [Improve](https://qodo-merge-docs.qodo.ai/tools/improve/) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

|

||||||

| | [Ask](https://qodo-merge-docs.qodo.ai/tools/ask/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | ⮑ [Ask on code lines](https://qodo-merge-docs.qodo.ai/tools/ask/#ask-lines) | ✅ | ✅ | | | |

|

|

||||||

| | [Help Docs](https://qodo-merge-docs.qodo.ai/tools/help_docs/?h=auto#auto-approval) | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [Update CHANGELOG](https://qodo-merge-docs.qodo.ai/tools/update_changelog/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [Add Documentation](https://qodo-merge-docs.qodo.ai/tools/documentation/) 💎 | ✅ | ✅ | | | |

|

|

||||||

| | [Analyze](https://qodo-merge-docs.qodo.ai/tools/analyze/) 💎 | ✅ | ✅ | | | |

|

|

||||||

| | [Auto-Approve](https://qodo-merge-docs.qodo.ai/tools/improve/?h=auto#auto-approval) 💎 | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [CI Feedback](https://qodo-merge-docs.qodo.ai/tools/ci_feedback/) 💎 | ✅ | | | | |

|

|

||||||

| | [Custom Prompt](https://qodo-merge-docs.qodo.ai/tools/custom_prompt/) 💎 | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [Generate Custom Labels](https://qodo-merge-docs.qodo.ai/tools/custom_labels/) 💎 | ✅ | ✅ | | | |

|

|

||||||

| | [Generate Tests](https://qodo-merge-docs.qodo.ai/tools/test/) 💎 | ✅ | ✅ | | | |

|

|

||||||

| | [Implement](https://qodo-merge-docs.qodo.ai/tools/implement/) 💎 | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [Scan Repo Discussions](https://qodo-merge-docs.qodo.ai/tools/scan_repo_discussions/) 💎 | ✅ | | | | |

|

|

||||||

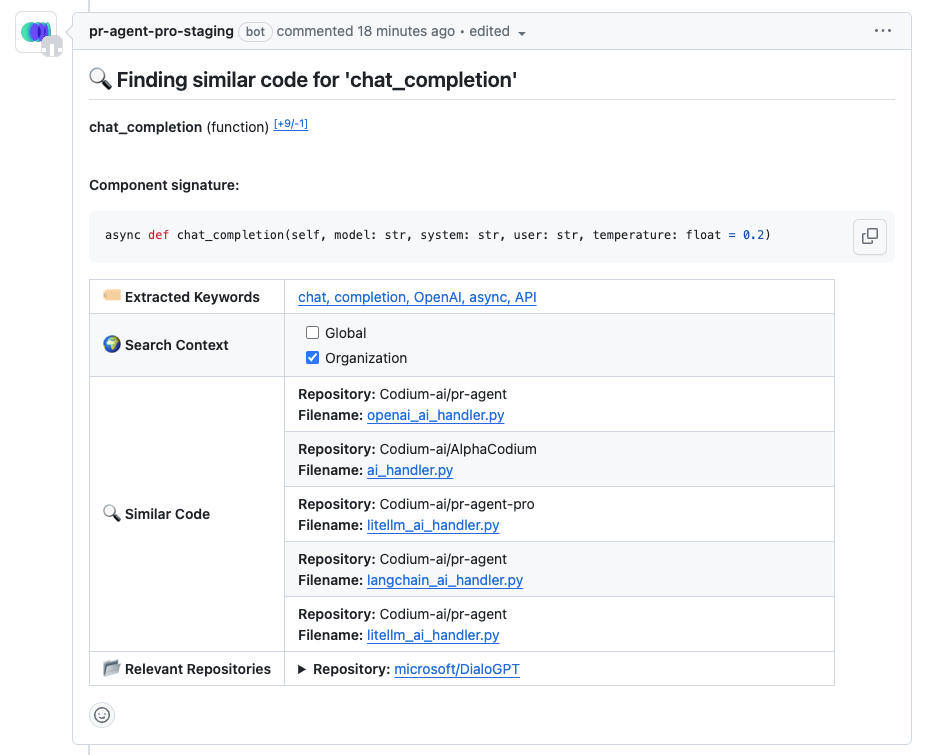

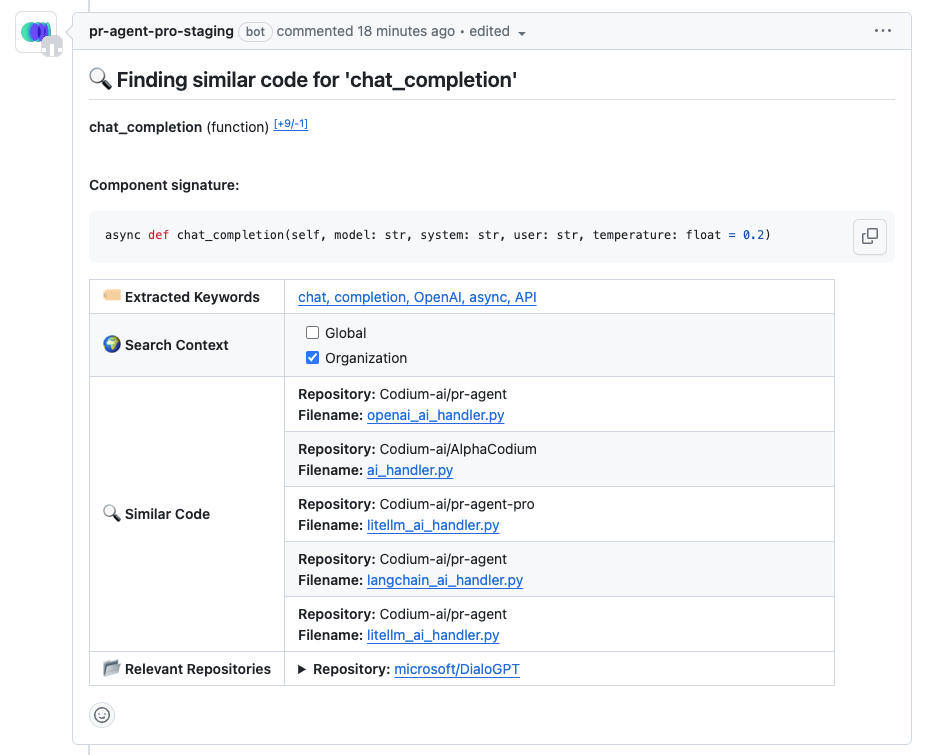

| | [Similar Code](https://qodo-merge-docs.qodo.ai/tools/similar_code/) 💎 | ✅ | | | | |

|

|

||||||

| | [Ticket Context](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/) 💎 | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [Utilizing Best Practices](https://qodo-merge-docs.qodo.ai/tools/improve/#best-practices) 💎 | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [PR Chat](https://qodo-merge-docs.qodo.ai/chrome-extension/features/#pr-chat) 💎 | ✅ | | | | |

|

|

||||||

| | [Suggestion Tracking](https://qodo-merge-docs.qodo.ai/tools/improve/#suggestion-tracking) 💎 | ✅ | ✅ | | | |

|

|

||||||

| | | | | | | |

|

|

||||||

| [USAGE](https://qodo-merge-docs.qodo.ai/usage-guide/) | [CLI](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#local-repo-cli) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

|

||||||

| | [App / webhook](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#github-app) | ✅ | ✅ | ✅ | ✅ | ✅ |

|

|

||||||

| | [Tagging bot](https://github.com/Codium-ai/pr-agent#try-it-now) | ✅ | | | | |

|

|

||||||

| | [Actions](https://qodo-merge-docs.qodo.ai/installation/github/#run-as-a-github-action) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | | | | | | |

|

|

||||||

| [CORE](https://qodo-merge-docs.qodo.ai/core-abilities/) | [Adaptive and token-aware file patch fitting](https://qodo-merge-docs.qodo.ai/core-abilities/compression_strategy/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [Auto Best Practices 💎](https://qodo-merge-docs.qodo.ai/core-abilities/auto_best_practices/) | ✅ | | | | |

|

|

||||||

| | [Chat on code suggestions](https://qodo-merge-docs.qodo.ai/core-abilities/chat_on_code_suggestions/) | ✅ | ✅ | | | |

|

|

||||||

| | [Code Validation 💎](https://qodo-merge-docs.qodo.ai/core-abilities/code_validation/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [Dynamic context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [Fetching ticket context](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/) | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [Global and wiki configurations](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/) 💎 | ✅ | ✅ | ✅ | | |

|

|

||||||

| | [Impact Evaluation](https://qodo-merge-docs.qodo.ai/core-abilities/impact_evaluation/) 💎 | ✅ | ✅ | | | |

|

|

||||||

| | [Incremental Update](https://qodo-merge-docs.qodo.ai/core-abilities/incremental_update/) | ✅ | | | | |

|

|

||||||

| | [Interactivity](https://qodo-merge-docs.qodo.ai/core-abilities/interactivity/) | ✅ | ✅ | | | |

|

|

||||||

| | [Local and global metadata](https://qodo-merge-docs.qodo.ai/core-abilities/metadata/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [Multiple models support](https://qodo-merge-docs.qodo.ai/usage-guide/changing_a_model/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [PR compression](https://qodo-merge-docs.qodo.ai/core-abilities/compression_strategy/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [PR interactive actions](https://www.qodo.ai/images/pr_agent/pr-actions.mp4) 💎 | ✅ | ✅ | | | |

|

|

||||||

| | [RAG context enrichment](https://qodo-merge-docs.qodo.ai/core-abilities/rag_context_enrichment/) | ✅ | | ✅ | | |

|

|

||||||

| | [Self reflection](https://qodo-merge-docs.qodo.ai/core-abilities/self_reflection/) | ✅ | ✅ | ✅ | ✅ | |

|

|

||||||

| | [Static code analysis](https://qodo-merge-docs.qodo.ai/core-abilities/static_code_analysis/) 💎 | ✅ | ✅ | | | |

|

|

||||||

- 💎 means this feature is available only in [Qodo Merge](https://www.qodo.ai/pricing/)

|

|

||||||

|

|

||||||

[//]: # (- Support for additional git providers is described in [here](./docs/Full_environments.md))

|

|

||||||

___

|

|

||||||

|

|

||||||

## See It in Action

|

|

||||||

|

|

||||||

</div>

|

|

||||||

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/530">/describe</a></h4>

|

|

||||||

<div align="center">

|

|

||||||

<p float="center">

|

|

||||||

<img src="https://www.codium.ai/images/pr_agent/describe_new_short_main.png" width="512">

|

|

||||||

</p>

|

|

||||||

</div>

|

|

||||||

<hr>

|

|

||||||

|

|

||||||

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/732#issuecomment-1975099151">/review</a></h4>

|

|

||||||

<div align="center">

|

|

||||||

<p float="center">

|

|

||||||

<kbd>

|

|

||||||

<img src="https://www.codium.ai/images/pr_agent/review_new_short_main.png" width="512">

|

|

||||||

</kbd>

|

|

||||||

</p>

|

|

||||||

</div>

|

|

||||||

<hr>

|

|

||||||

|

|

||||||

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/732#issuecomment-1975099159">/improve</a></h4>

|

|

||||||

<div align="center">

|

|

||||||

<p float="center">

|

|

||||||

<kbd>

|

|

||||||

<img src="https://www.codium.ai/images/pr_agent/improve_new_short_main.png" width="512">

|

|

||||||

</kbd>

|

|

||||||

</p>

|

|

||||||

</div>

|

|

||||||

|

|

||||||

<div align="left">

|

|

||||||

|

|

||||||

</div>

|

|

||||||

<hr>

|

|

||||||

|

|

||||||

## Try It Now

|

|

||||||

|

|

||||||

Try the Claude Sonnet powered PR-Agent instantly on _your public GitHub repository_. Just mention `@CodiumAI-Agent` and add the desired command in any PR comment. The agent will generate a response based on your command.

|

|

||||||

For example, add a comment to any pull request with the following text:

|

|

||||||

|

|

||||||

```

|

|

||||||

@CodiumAI-Agent /review

|

|

||||||

```

|

|

||||||

|

|

||||||

and the agent will respond with a review of your PR.

|

|

||||||

|

|

||||||

Note that this is a promotional bot, suitable only for initial experimentation.

|

|

||||||

It does not have 'edit' access to your repo, for example, so it cannot update the PR description or add labels (`@CodiumAI-Agent /describe` will publish PR description as a comment). In addition, the bot cannot be used on private repositories, as it does not have access to the files there.

|

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

## Qodo Merge 💎

|

## ✨ Advanced Features in Qodo

|

||||||

|

|

||||||

[Qodo Merge](https://www.qodo.ai/pricing/) is a hosted version of PR-Agent, provided by Qodo. It is available for a monthly fee, and provides the following benefits:

|

### 🧭 PR → Ticket Automation

|

||||||

|

Seamlessly links pull requests to your project tracking system for end-to-end visibility.

|

||||||

|

|

||||||

1. **Fully managed** - We take care of everything for you - hosting, models, regular updates, and more. Installation is as simple as signing up and adding the Qodo Merge app to your GitHub/GitLab/BitBucket repo.

|

### ✅ Auto Best Practices

|

||||||

2. **Improved privacy** - No data will be stored or used to train models. Qodo Merge will employ zero data retention, and will use an OpenAI account with zero data retention.

|

Learns your team’s standards and automatically enforces them during code reviews.

|

||||||

3. **Improved support** - Qodo Merge users will receive priority support, and will be able to request new features and capabilities.

|

|

||||||

4. **Extra features** - In addition to the benefits listed above, Qodo Merge will emphasize more customization, and the usage of static code analysis, in addition to LLM logic, to improve results.

|

|

||||||

See [here](https://qodo-merge-docs.qodo.ai/overview/pr_agent_pro/) for a list of features available in Qodo Merge.

|

|

||||||

|

|

||||||

## How It Works

|

### 🧪 Code Validation

|

||||||

|

Performs advanced static and semantic analysis to catch issues before merge.

|

||||||

|

|

||||||

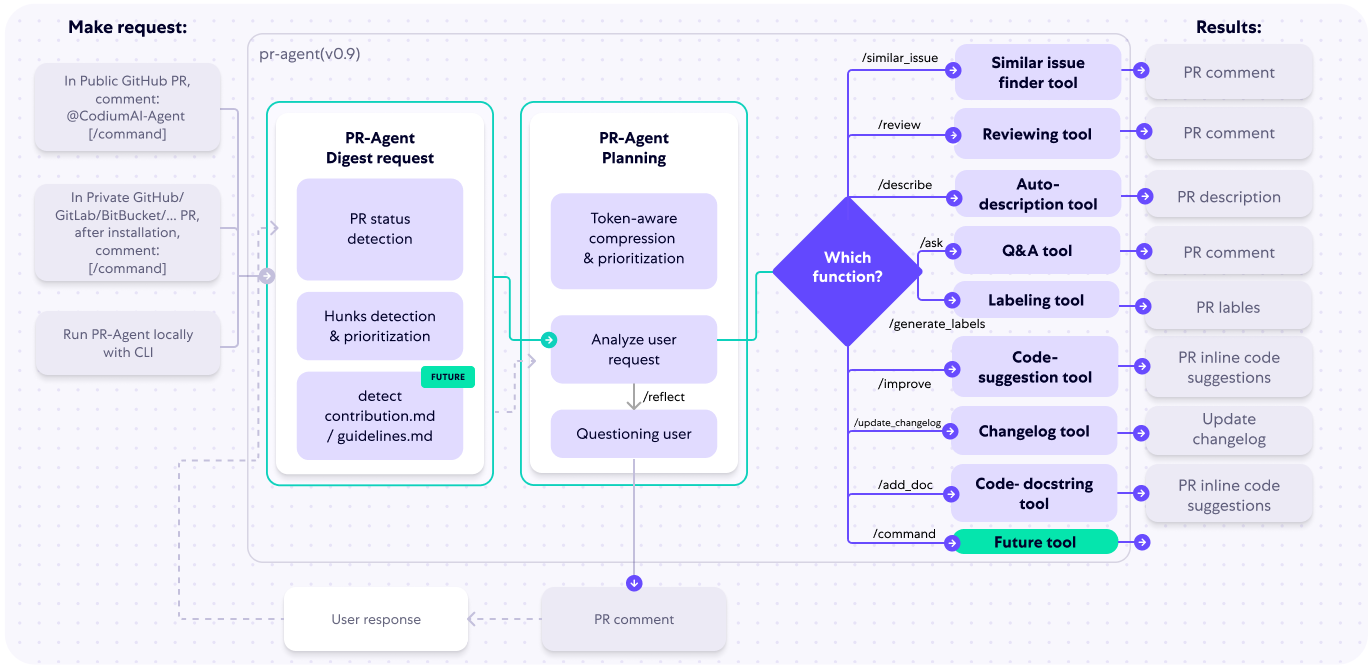

The following diagram illustrates PR-Agent tools and their flow:

|

### 💬 PR Chat Interface

|

||||||

|

Lets you converse with your PR to explain, summarize, or suggest improvements instantly.

|

||||||

|

|

||||||

|

### 🔍 Impact Evaluation

|

||||||

|

Analyzes the business and technical effect of each change before approval.

|

||||||

|

|

||||||

Check out the [PR Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/#pr-compression-strategy) page for more details on how we convert a code diff to a manageable LLM prompt

|

---

|

||||||

|

|

||||||

## Why Use PR-Agent?

|

## ❤️ Community

|

||||||

|

This open-source release remains here as a community contribution from Qodo — the origin of modern AI-powered code collaboration.

|

||||||

A reasonable question that can be asked is: `"Why use PR-Agent? What makes it stand out from existing tools?"`

|

We’re proud to share it and inspire developers worldwide.

|

||||||

|

|

||||||

Here are some advantages of PR-Agent:

|

|

||||||

|

|

||||||

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single LLM call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.

|

|

||||||

- Our [PR Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/#pr-compression-strategy) is a core ability that enables to effectively tackle both short and long PRs.

|

|

||||||

- Our JSON prompting strategy enables us to have **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the [configuration](pr_agent/settings/configuration.toml) file. Adding additional categories is easy and accessible.

|

|

||||||

- We support **multiple git providers** (GitHub, GitLab, BitBucket), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT, Claude, Deepseek, ...)

|

|

||||||

|

|

||||||

## Data Privacy

|

|

||||||

|

|

||||||

### Self-hosted PR-Agent

|

|

||||||

|

|

||||||

- If you host PR-Agent with your OpenAI API key, it is between you and OpenAI. You can read their API data privacy policy here:

|

|

||||||

https://openai.com/enterprise-privacy

|

|

||||||

|

|

||||||

### Qodo-hosted Qodo Merge 💎

|

|

||||||

|

|

||||||

- When using Qodo Merge 💎, hosted by Qodo, we will not store any of your data, nor will we use it for training. You will also benefit from an OpenAI account with zero data retention.

|

|

||||||

|

|

||||||

- For certain clients, Qodo-hosted Qodo Merge will use Qodo’s proprietary models — if this is the case, you will be notified.

|

|

||||||

|

|

||||||

- No passive collection of Code and Pull Requests’ data — Qodo Merge will be active only when you invoke it, and it will then extract and analyze only data relevant to the executed command and queried pull request.

|

|

||||||

|

|

||||||

### Qodo Merge Chrome extension

|

|

||||||

|

|

||||||

- The [Qodo Merge Chrome extension](https://chromewebstore.google.com/detail/qodo-merge-ai-powered-cod/ephlnjeghhogofkifjloamocljapahnl) serves solely to modify the visual appearance of a GitHub PR screen. It does not transmit any user's repo or pull request code. Code is only sent for processing when a user submits a GitHub comment that activates a PR-Agent tool, in accordance with the standard privacy policy of Qodo-Merge.

|

|

||||||

|

|

||||||

## Contributing

|

|

||||||

|

|

||||||

To contribute to the project, get started by reading our [Contributing Guide](https://github.com/qodo-ai/pr-agent/blob/b09eec265ef7d36c232063f76553efb6b53979ff/CONTRIBUTING.md).

|

|

||||||

|

|

||||||

## Links

|

|

||||||

|

|

||||||

- Discord community: https://discord.com/invite/SgSxuQ65GF

|

|

||||||

- Qodo site: https://www.qodo.ai/

|

|

||||||

- Blog: https://www.qodo.ai/blog/

|

|

||||||

- Troubleshooting: https://www.qodo.ai/blog/technical-faq-and-troubleshooting/

|

|

||||||

- Support: support@qodo.ai

|

|

||||||

|

|

|

||||||

|

|

@ -59,6 +59,6 @@ steps:

|

||||||

|

|

||||||

We take the security of PR-Agent seriously. If you discover a security vulnerability, please report it immediately to:

|

We take the security of PR-Agent seriously. If you discover a security vulnerability, please report it immediately to:

|

||||||

|

|

||||||

Email: tal.r@qodo.ai

|

Email: security@qodo.ai

|

||||||

|

|

||||||

Please include a description of the vulnerability, steps to reproduce, and the affected PR-Agent version.

|

Please include a description of the vulnerability, steps to reproduce, and the affected PR-Agent version.

|

||||||

|

|

|

||||||

5

docs/docs/.gitbook.yaml

Normal file

5

docs/docs/.gitbook.yaml

Normal file

|

|

@ -0,0 +1,5 @@

|

||||||

|

root: ./

|

||||||

|

|

||||||

|

structure:

|

||||||

|

readme: ../README.md

|

||||||

|

summary: ./summary.md

|

||||||

|

|

@ -19,7 +19,6 @@

|

||||||

</div>

|

</div>

|

||||||

|

|

||||||

<style>

|

<style>

|

||||||

Untitled

|

|

||||||

.search-section {

|

.search-section {

|

||||||

max-width: 800px;

|

max-width: 800px;

|

||||||

margin: 0 auto;

|

margin: 0 auto;

|

||||||

|

|

@ -202,7 +201,23 @@ h1 {

|

||||||

|

|

||||||

<script>

|

<script>

|

||||||

window.addEventListener('load', function() {

|

window.addEventListener('load', function() {

|

||||||

function displayResults(responseText) {

|

function extractText(responseText) {

|

||||||

|

try {

|

||||||

|

console.log('responseText: ', responseText);

|

||||||

|

const results = JSON.parse(responseText);

|

||||||

|

const msg = results.message;

|

||||||

|

|

||||||

|

if (!msg || msg.trim() === '') {

|

||||||

|

return "No results found";

|

||||||

|

}

|

||||||

|

return msg;

|

||||||

|

} catch (error) {

|

||||||

|

console.error('Error parsing results:', error);

|

||||||

|

throw new Error("Failed parsing response message");

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

function displayResults(msg) {

|

||||||

const resultsContainer = document.getElementById('results');

|

const resultsContainer = document.getElementById('results');

|

||||||

const spinner = document.getElementById('spinner');

|

const spinner = document.getElementById('spinner');

|

||||||

const searchContainer = document.querySelector('.search-container');

|

const searchContainer = document.querySelector('.search-container');

|

||||||

|

|

@ -214,8 +229,6 @@ window.addEventListener('load', function() {

|

||||||

searchContainer.scrollIntoView({ behavior: 'smooth', block: 'start' });

|

searchContainer.scrollIntoView({ behavior: 'smooth', block: 'start' });

|

||||||

|

|

||||||

try {

|

try {

|

||||||

const results = JSON.parse(responseText);

|

|

||||||

|

|

||||||

marked.setOptions({

|

marked.setOptions({

|

||||||

breaks: true,

|

breaks: true,

|

||||||

gfm: true,

|

gfm: true,

|

||||||

|

|

@ -223,7 +236,7 @@ window.addEventListener('load', function() {

|

||||||

sanitize: false

|

sanitize: false

|

||||||

});

|

});

|

||||||

|

|

||||||

const htmlContent = marked.parse(results.message);

|

const htmlContent = marked.parse(msg);

|

||||||

|

|

||||||

resultsContainer.className = 'markdown-content';

|

resultsContainer.className = 'markdown-content';

|

||||||

resultsContainer.innerHTML = htmlContent;

|

resultsContainer.innerHTML = htmlContent;

|

||||||

|

|

@ -242,7 +255,7 @@ window.addEventListener('load', function() {

|

||||||

}, 100);

|

}, 100);

|

||||||

} catch (error) {

|

} catch (error) {

|

||||||

console.error('Error parsing results:', error);

|

console.error('Error parsing results:', error);

|

||||||

resultsContainer.innerHTML = '<div class="error-message">Error processing results</div>';

|

resultsContainer.innerHTML = '<div class="error-message">Cannot process results</div>';

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

|

@ -275,24 +288,24 @@ window.addEventListener('load', function() {

|

||||||

body: JSON.stringify(data)

|

body: JSON.stringify(data)

|

||||||

};

|

};

|

||||||

|

|

||||||

// const API_ENDPOINT = 'http://0.0.0.0:3000/api/v1/docs_help';

|

//const API_ENDPOINT = 'http://0.0.0.0:3000/api/v1/docs_help';

|

||||||

const API_ENDPOINT = 'https://help.merge.qodo.ai/api/v1/docs_help';

|

const API_ENDPOINT = 'https://help.merge.qodo.ai/api/v1/docs_help';

|

||||||

|

|

||||||

const response = await fetch(API_ENDPOINT, options);

|

const response = await fetch(API_ENDPOINT, options);

|

||||||

|

const responseText = await response.text();

|

||||||

|

const msg = extractText(responseText);

|

||||||

|

|

||||||

if (!response.ok) {

|

if (!response.ok) {

|

||||||

throw new Error(`HTTP error! status: ${response.status}`);

|

throw new Error(`An error (${response.status}) occurred during search: "${msg}"`);

|

||||||

}

|

}

|

||||||

|

|

||||||

const responseText = await response.text();

|

displayResults(msg);

|

||||||

displayResults(responseText);

|

|

||||||

} catch (error) {

|

} catch (error) {

|

||||||

spinner.style.display = 'none';

|

spinner.style.display = 'none';

|

||||||

resultsContainer.innerHTML = `

|

const errorDiv = document.createElement('div');

|

||||||

<div class="error-message">

|

errorDiv.className = 'error-message';

|

||||||

An error occurred while searching. Please try again later.

|

errorDiv.textContent = error instanceof Error ? error.message : String(error);

|

||||||

</div>

|

resultsContainer.replaceChildren(errorDiv);

|

||||||

`;

|

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -1,8 +1,10 @@

|

||||||

|

`Platforms supported: GitHub Cloud`

|

||||||

|

|

||||||

[Qodo Merge Chrome extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl){:target="_blank"} is a collection of tools that integrates seamlessly with your GitHub environment, aiming to enhance your Git usage experience, and providing AI-powered capabilities to your PRs.

|

[Qodo Merge Chrome extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl){:target="_blank"} is a collection of tools that integrates seamlessly with your GitHub environment, aiming to enhance your Git usage experience, and providing AI-powered capabilities to your PRs.

|

||||||

|

|

||||||

With a single-click installation you will gain access to a context-aware chat on your pull requests code, a toolbar extension with multiple AI feedbacks, Qodo Merge filters, and additional abilities.

|

With a single-click installation you will gain access to a context-aware chat on your pull requests code, a toolbar extension with multiple AI feedbacks, Qodo Merge filters, and additional abilities.

|

||||||

|

|

||||||

The extension is powered by top code models like Claude 3.7 Sonnet and o4-mini. All the extension's features are free to use on public repositories.

|

The extension is powered by top code models like GPT-5. All the extension's features are free to use on public repositories.

|

||||||

|

|

||||||

For private repositories, you will need to install [Qodo Merge](https://github.com/apps/qodo-merge-pro){:target="_blank"} in addition to the extension.

|

For private repositories, you will need to install [Qodo Merge](https://github.com/apps/qodo-merge-pro){:target="_blank"} in addition to the extension.

|

||||||

For a demonstration of how to install Qodo Merge and use it with the Chrome extension, please refer to the tutorial video at the provided [link](https://codium.ai/images/pr_agent/private_repos.mp4){:target="_blank"}.

|

For a demonstration of how to install Qodo Merge and use it with the Chrome extension, please refer to the tutorial video at the provided [link](https://codium.ai/images/pr_agent/private_repos.mp4){:target="_blank"}.

|

||||||

|

|

@ -12,3 +14,101 @@ For a demonstration of how to install Qodo Merge and use it with the Chrome exte

|

||||||

### Supported browsers

|

### Supported browsers

|

||||||

|

|

||||||

The extension is supported on all Chromium-based browsers, including Google Chrome, Arc, Opera, Brave, and Microsoft Edge.

|

The extension is supported on all Chromium-based browsers, including Google Chrome, Arc, Opera, Brave, and Microsoft Edge.

|

||||||

|

|

||||||

|

## Features

|

||||||

|

|

||||||

|

### PR chat

|

||||||

|

|

||||||

|

The PR-Chat feature allows to freely chat with your PR code, within your GitHub environment.

|

||||||

|

It will seamlessly use the PR as context to your chat session, and provide AI-powered feedback.

|

||||||

|

|

||||||

|

To enable private chat, simply install the Qodo Merge Chrome extension. After installation, each PR's file-changed tab will include a chat box, where you may ask questions about your code.

|

||||||

|

This chat session is **private**, and won't be visible to other users.

|

||||||

|

|

||||||

|

All open-source repositories are supported.

|

||||||

|

For private repositories, you will also need to install Qodo Merge. After installation, make sure to open at least one new PR to fully register your organization. Once done, you can chat with both new and existing PRs across all installed repositories.

|

||||||

|

|

||||||

|

#### Context-aware PR chat

|

||||||

|

|

||||||

|

Qodo Merge constructs a comprehensive context for each pull request, incorporating the PR description, commit messages, and code changes with extended dynamic context. This contextual information, along with additional PR-related data, forms the foundation for an AI-powered chat session. The agent then leverages this rich context to provide intelligent, tailored responses to user inquiries about the pull request.

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/pr_chat_1.png" width="768">

|

||||||

|

<img src="https://codium.ai/images/pr_agent/pr_chat_2.png" width="768">

|

||||||

|

|

||||||

|

### Toolbar extension

|

||||||

|

|

||||||

|

With Qodo Merge Chrome extension, it's [easier than ever](https://www.youtube.com/watch?v=gT5tli7X4H4) to interactively configure and experiment with the different tools and configuration options.

|

||||||

|

|

||||||

|

For private repositories, after you found the setup that works for you, you can also easily export it as a persistent configuration file, and use it for automatic commands.

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/toolbar1.png" width="512">

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/toolbar2.png" width="512">

|

||||||

|

|

||||||

|

### Qodo Merge filters

|

||||||

|

|

||||||

|

Qodo Merge filters is a sidepanel option. that allows you to filter different message in the conversation tab.

|

||||||

|

|

||||||

|

For example, you can choose to present only message from Qodo Merge, or filter those messages, focusing only on user's comments.

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/pr_agent_filters1.png" width="256">

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/pr_agent_filters2.png" width="256">

|

||||||

|

|

||||||

|

### Enhanced code suggestions

|

||||||

|

|

||||||

|

Qodo Merge Chrome extension adds the following capabilities to code suggestions tool's comments:

|

||||||

|

|

||||||

|

- Auto-expand the table when you are viewing a code block, to avoid clipping.

|

||||||

|

- Adding a "quote-and-reply" button, that enables to address and comment on a specific suggestion (for example, asking the author to fix the issue)

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/chrome_extension_code_suggestion1.png" width="512">

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/chrome_extension_code_suggestion2.png" width="512">

|

||||||

|

|

||||||

|

## Data Privacy

|

||||||

|

|

||||||

|

We take your code's security and privacy seriously:

|

||||||

|

|

||||||

|

- The Chrome extension will not send your code to any external servers.

|

||||||

|

- For private repositories, we will first validate the user's identity and permissions. After authentication, we generate responses using the existing Qodo Merge integration.

|

||||||

|

|

||||||

|

## Options and Configurations

|

||||||

|

|

||||||

|

### Accessing the Options Page

|

||||||

|

|

||||||

|

To access the options page for the Qodo Merge Chrome extension:

|

||||||

|

|

||||||

|

1. Find the extension icon in your Chrome toolbar (usually in the top-right corner of your browser)

|

||||||

|

2. Right-click on the extension icon

|

||||||

|

3. Select "Options" from the context menu that appears

|

||||||

|

|

||||||

|

Alternatively, you can access the options page directly using this URL:

|

||||||

|

|

||||||

|

[chrome-extension://ephlnjeghhogofkifjloamocljapahnl/options.html](chrome-extension://ephlnjeghhogofkifjloamocljapahnl/options.html)

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/chrome_ext_options.png" width="256">

|

||||||

|

|

||||||

|

### Configuration Options

|

||||||

|

|

||||||

|

<img src="https://codium.ai/images/pr_agent/chrome_ext_settings_page.png" width="512">

|

||||||

|

|

||||||

|

#### API Base Host

|

||||||

|

|

||||||

|

For single-tenant customers, you can configure the extension to communicate directly with your company's Qodo Merge server instance.

|

||||||

|

|

||||||

|

To set this up:

|

||||||

|

|

||||||

|

- Enter your organization's Qodo Merge API endpoint in the "API Base Host" field

|

||||||

|

- This endpoint should be provided by your Qodo DevOps Team

|

||||||

|

|

||||||

|

*Note: The extension does not send your code to the server, but only triggers your previously installed Qodo Merge application.*

|

||||||

|

|

||||||

|

#### Interface Options

|

||||||

|

|

||||||

|

You can customize the extension's interface by:

|

||||||

|

|

||||||

|

- Toggling the "Show Qodo Merge Toolbar" option

|

||||||

|

- When disabled, the toolbar will not appear in your Github comment bar

|

||||||

|

|

||||||

|

Remember to click "Save Settings" after making any changes.

|

||||||

|

|

@ -41,13 +41,9 @@ When presenting the suggestions generated by the `improve` tool, Qodo Merge will

|

||||||

|

|

||||||

Teams and companies can also manually define their own [custom best practices](https://qodo-merge-docs.qodo.ai/tools/improve/#best-practices) in Qodo Merge.

|

Teams and companies can also manually define their own [custom best practices](https://qodo-merge-docs.qodo.ai/tools/improve/#best-practices) in Qodo Merge.

|

||||||

|

|

||||||

When custom best practices exist, Qodo Merge will still generate an 'auto best practices' wiki file, though it won't be used by the `improve` tool.

|

When custom best practices exist, Qodo Merge will use both the auto-generated best practices and your custom best practices together. The auto best practices file provides additional insights derived from suggestions your team found valuable enough to implement, while also demonstrating effective patterns for writing AI-friendly best practices.

|

||||||

However, this auto-generated file can still serve two valuable purposes:

|

|

||||||

|

|

||||||

1. It can help enhance your custom best practices with additional insights derived from suggestions your team found valuable enough to implement

|

We recommend utilizing both auto and custom best practices to get the most comprehensive code improvement suggestions for your team.

|

||||||

2. It demonstrates effective patterns for writing AI-friendly best practices

|

|

||||||

|

|

||||||

Even when using custom best practices, we recommend regularly reviewing the auto best practices file to refine your custom rules.

|

|

||||||

|

|

||||||

## Relevant configurations

|

## Relevant configurations

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -9,9 +9,10 @@ This integration enriches the review process by automatically surfacing relevant

|

||||||

|

|

||||||

**Ticket systems supported**:

|

**Ticket systems supported**:

|

||||||

|

|

||||||

- [GitHub](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#github-issues-integration)

|

- [GitHub/Gitlab Issues](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#githubgitlab-issues-integration)

|

||||||

- [Jira (💎)](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#jira-integration)

|

- [Jira (💎)](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#jira-integration)

|

||||||

- [Linear (💎)](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#linear-integration)

|

- [Linear (💎)](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#linear-integration)

|

||||||

|

- [Monday (💎)](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#monday-integration)

|

||||||

|

|

||||||

**Ticket data fetched:**

|

**Ticket data fetched:**

|

||||||

|

|

||||||

|

|

@ -53,17 +54,17 @@ A `PR Code Verified` label indicates the PR code meets ticket requirements, but

|

||||||

|

|

||||||

#### Configuration options

|

#### Configuration options

|

||||||

|

|

||||||

-

|

-

|

||||||

|

|

||||||

By default, the tool will automatically validate if the PR complies with the referenced ticket.

|

By default, the `review` tool will automatically validate if the PR complies with the referenced ticket.

|

||||||

If you want to disable this feedback, add the following line to your configuration file:

|

If you want to disable this feedback, add the following line to your configuration file:

|

||||||

|

|

||||||

```toml

|

```toml

|

||||||

[pr_reviewer]

|

[pr_reviewer]

|

||||||

require_ticket_analysis_review=false

|

require_ticket_analysis_review=false

|

||||||

```

|

```

|

||||||

|

|

||||||

-

|

-

|

||||||

|

|

||||||

If you set:

|

If you set:

|

||||||

```toml

|

```toml

|

||||||

|

|

@ -71,18 +72,48 @@ A `PR Code Verified` label indicates the PR code meets ticket requirements, but

|

||||||

check_pr_additional_content=true

|

check_pr_additional_content=true

|

||||||

```

|

```

|

||||||

(default: `false`)

|

(default: `false`)

|

||||||

|

|

||||||

the `review` tool will also validate that the PR code doesn't contain any additional content that is not related to the ticket. If it does, the PR will be labeled at best as `PR Code Verified`, and the `review` tool will provide a comment with the additional unrelated content found in the PR code.

|

the `review` tool will also validate that the PR code doesn't contain any additional content that is not related to the ticket. If it does, the PR will be labeled at best as `PR Code Verified`, and the `review` tool will provide a comment with the additional unrelated content found in the PR code.

|

||||||

|

|

||||||

## GitHub Issues Integration

|

### Compliance tool

|

||||||

|

|

||||||

Qodo Merge will automatically recognize GitHub issues mentioned in the PR description and fetch the issue content.

|

The `compliance` tool also uses ticket context to validate that PR changes fulfill the requirements specified in linked tickets.

|

||||||

Examples of valid GitHub issue references:

|

|

||||||

|

|

||||||

- `https://github.com/<ORG_NAME>/<REPO_NAME>/issues/<ISSUE_NUMBER>`

|

#### Configuration options

|

||||||

|

|

||||||

|

-

|

||||||

|

|

||||||

|

By default, the `compliance` tool will automatically validate if the PR complies with the referenced ticket.

|

||||||

|

If you want to disable ticket compliance checking in the compliance tool, add the following line to your configuration file:

|

||||||

|

|

||||||

|

```toml

|

||||||

|

[pr_compliance]

|

||||||

|

require_ticket_analysis_review=false

|

||||||

|

```

|

||||||

|

|

||||||

|

-

|

||||||

|

|

||||||

|

If you set:

|

||||||

|

```toml

|

||||||

|

[pr_compliance]

|

||||||

|

check_pr_additional_content=true

|

||||||

|

```

|

||||||

|

(default: `false`)

|

||||||

|

|

||||||

|

the `compliance` tool will also validate that the PR code doesn't contain any additional content that is not related to the ticket.

|

||||||

|

|

||||||

|

## GitHub/Gitlab Issues Integration

|

||||||

|

|

||||||

|

Qodo Merge will automatically recognize GitHub/Gitlab issues mentioned in the PR description and fetch the issue content.

|

||||||

|

Examples of valid GitHub/Gitlab issue references:

|

||||||

|

|

||||||

|

- `https://github.com/<ORG_NAME>/<REPO_NAME>/issues/<ISSUE_NUMBER>` or `https://gitlab.com/<ORG_NAME>/<REPO_NAME>/-/issues/<ISSUE_NUMBER>`

|

||||||

- `#<ISSUE_NUMBER>`

|

- `#<ISSUE_NUMBER>`

|

||||||

- `<ORG_NAME>/<REPO_NAME>#<ISSUE_NUMBER>`

|

- `<ORG_NAME>/<REPO_NAME>#<ISSUE_NUMBER>`

|

||||||

|

|

||||||

|

Branch names can also be used to link issues, for example:

|

||||||

|

- `123-fix-bug` (where `123` is the issue number)

|

||||||

|

|

||||||

Since Qodo Merge is integrated with GitHub, it doesn't require any additional configuration to fetch GitHub issues.

|

Since Qodo Merge is integrated with GitHub, it doesn't require any additional configuration to fetch GitHub issues.

|

||||||

|

|

||||||

## Jira Integration 💎

|

## Jira Integration 💎

|

||||||

|

|

@ -104,7 +135,7 @@ Installation steps:

|

||||||

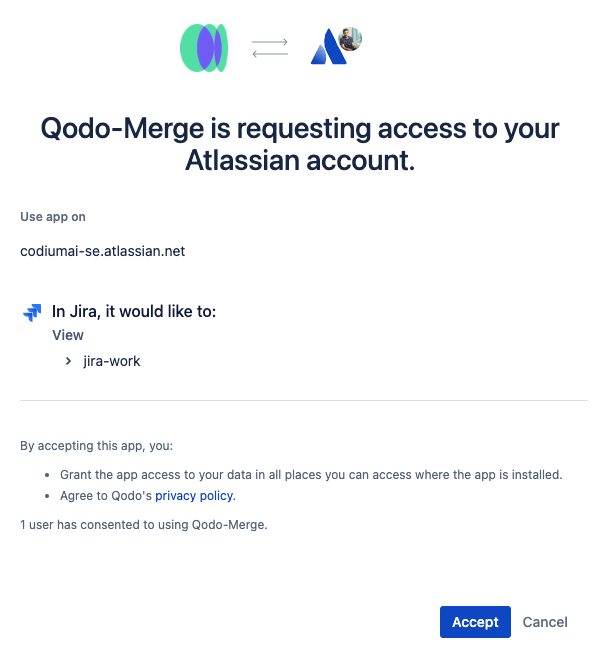

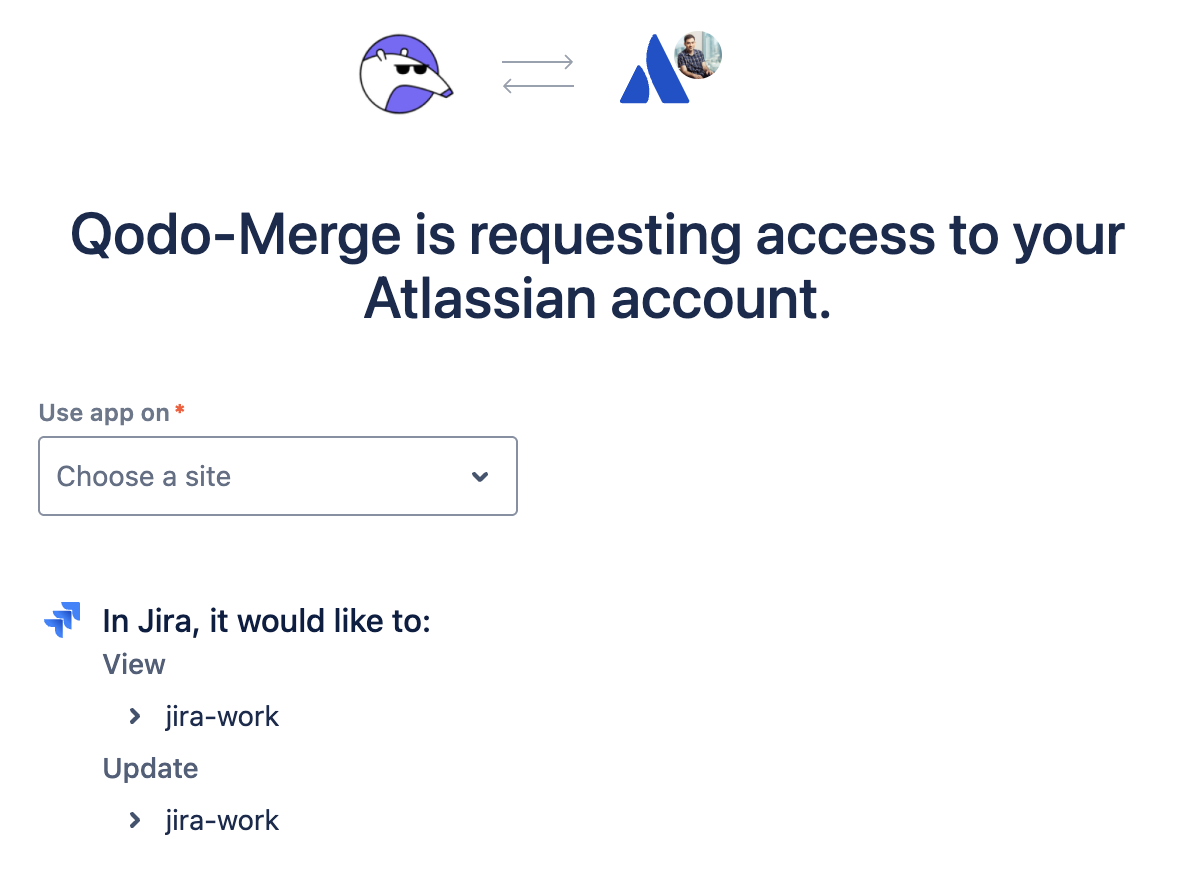

2. Click on the Connect **Jira Cloud** button to connect the Jira Cloud app

|

2. Click on the Connect **Jira Cloud** button to connect the Jira Cloud app

|

||||||

|

|

||||||

3. Click the `accept` button.<br>

|

3. Click the `accept` button.<br>

|

||||||

{width=384}

|

{width=384}

|

||||||

|

|

||||||

4. After installing the app, you will be redirected to the Qodo Merge registration page. and you will see a success message.<br>

|

4. After installing the app, you will be redirected to the Qodo Merge registration page. and you will see a success message.<br>

|

||||||

{width=384}

|

{width=384}

|

||||||

|

|

@ -448,3 +479,49 @@ Name your branch with the ticket ID as a prefix (e.g., `ABC-123-feature-descript

|

||||||

```

|

```

|

||||||

|

|

||||||

Replace `[ORG_ID]` with your Linear organization identifier.

|

Replace `[ORG_ID]` with your Linear organization identifier.

|

||||||

|

|

||||||

|

## Monday Integration 💎

|

||||||

|

|

||||||

|

### Monday App Authentication

|

||||||

|

The recommended way to authenticate with Monday is to connect the Monday app through the Qodo Merge portal.

|

||||||

|

|

||||||

|

Installation steps:

|

||||||

|

|

||||||

|

1. Go to the [Qodo Merge integrations page](https://app.qodo.ai/qodo-merge/integrations)

|

||||||

|

2. Navigate to the **Integrations** tab

|

||||||

|

3. Click on the **Monday** button to connect the Monday app

|

||||||

|

4. Follow the authentication flow to authorize Qodo Merge to access your Monday workspace

|

||||||

|

5. Once connected, Qodo Merge will be able to fetch Monday ticket context for your PRs

|

||||||

|

|

||||||

|

### Monday Ticket Context

|

||||||

|

`Ticket Context and Ticket Compliance are supported for Monday items, but not yet available in the "PR to Ticket" feature.`

|

||||||

|

|

||||||

|

When Qodo Merge processes your PRs, it extracts the following information from Monday items:

|

||||||

|

|

||||||

|

* **Item ID and Name:** The unique identifier and title of the Monday item

|

||||||

|

* **Item URL:** Direct link to the Monday item in your workspace

|

||||||

|

* **Ticket Description:** All long text type columns and their values from the item

|

||||||

|

* **Status and Labels:** Current status values and color-coded labels for quick context

|

||||||

|

* **Sub-items:** Names, IDs, and descriptions of all related sub-items with hierarchical structure

|

||||||

|

|

||||||

|

### How Monday Items are Detected

|

||||||

|

Qodo Merge automatically detects Monday items from:

|

||||||

|

|

||||||

|

* PR Descriptions: Full Monday URLs like https://workspace.monday.com/boards/123/pulses/456

|

||||||

|

* Branch Names: Item IDs in branch names (6-12 digit patterns) - requires `monday_base_url` configuration

|

||||||

|

|

||||||

|

### Configuration Setup (Optional)

|

||||||

|

If you want to extract Monday item references from branch names or use standalone item IDs, you need to set the `monday_base_url` in your configuration file:

|

||||||

|

|

||||||

|

To support Monday ticket referencing from branch names, item IDs (6-12 digits) should be part of the branch names and you need to configure `monday_base_url`:

|

||||||

|

```toml

|

||||||

|

[monday]

|

||||||

|

monday_base_url = "https://your_monday_workspace.monday.com"

|

||||||

|

```

|

||||||

|

|

||||||

|

Examples of supported branch name patterns:

|

||||||

|

|

||||||

|

* `feature/123456789` → extracts item ID 123456789

|

||||||

|

* `bugfix/456789012-login-fix` → extracts item ID 456789012

|

||||||

|

* `123456789` → extracts item ID 123456789

|

||||||

|

* `456789012-login-fix` → extracts item ID 456789012

|

||||||

|

|

|

||||||

19

docs/docs/core-abilities/high_level_suggestions.md

Normal file

19

docs/docs/core-abilities/high_level_suggestions.md

Normal file

|

|

@ -0,0 +1,19 @@

|

||||||

|

# High-level Code Suggestions 💎

|

||||||

|

|

||||||

|

`Supported Git Platforms: GitHub, GitLab, Bitbucket Cloud, Bitbucket Server`

|

||||||

|

|

||||||

|

## Overview

|

||||||

|

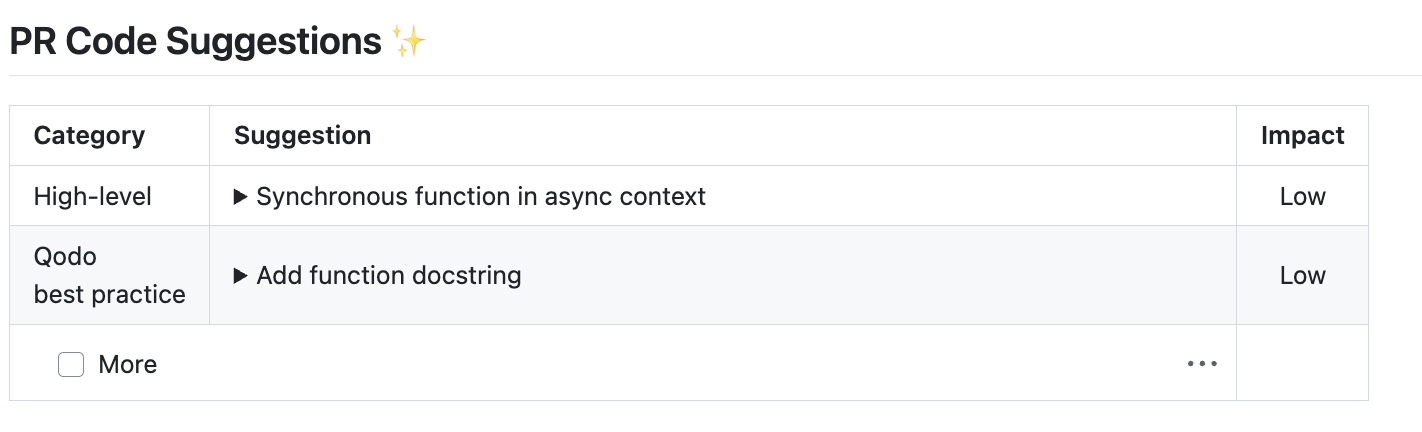

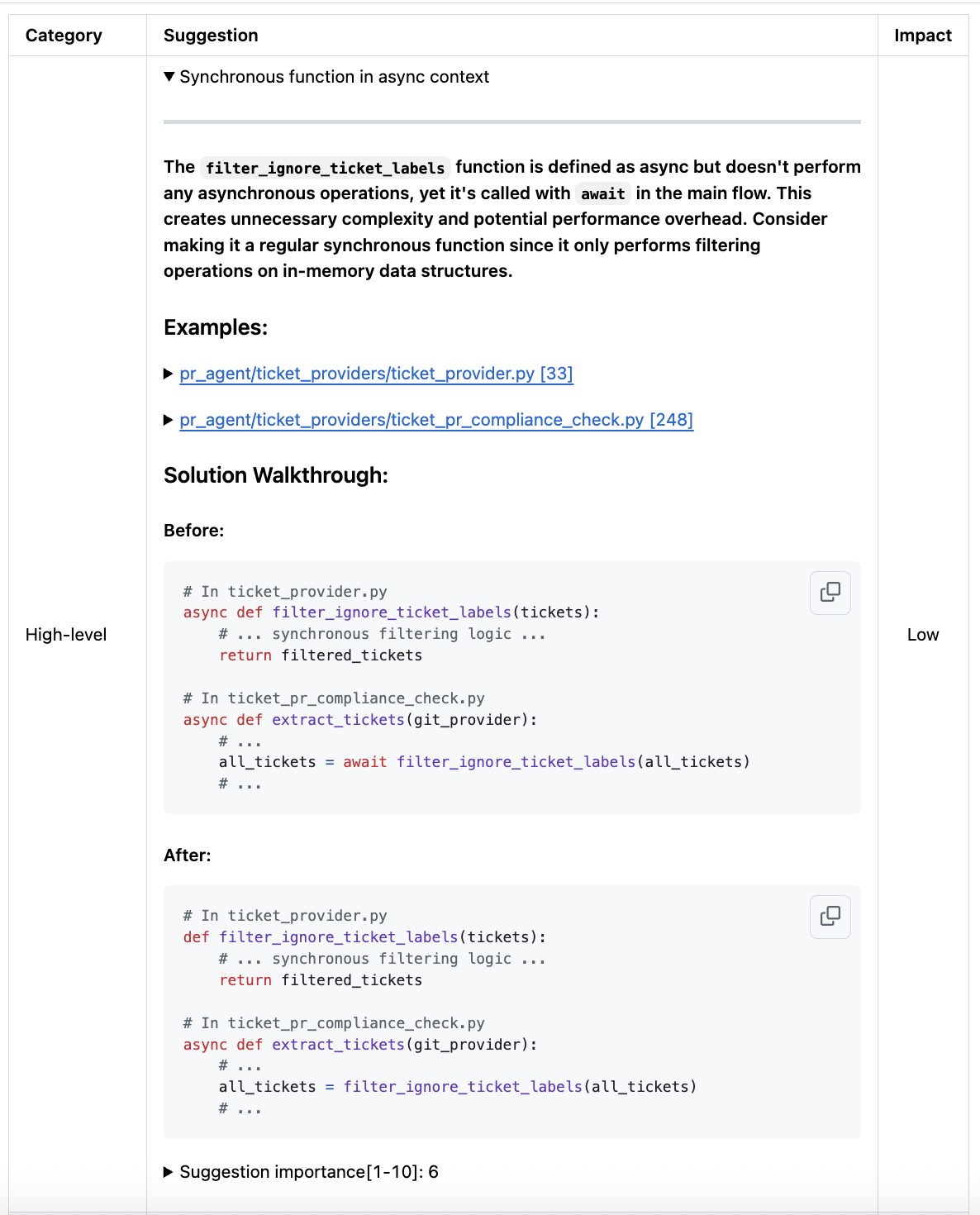

High-level code suggestions, generated by the `improve` tool, offer big-picture code suggestions for your pull request. They focus on broader improvements rather than local fixes, and provide before-and-after code snippets to illustrate the recommended changes and guide implementation.

|

||||||

|

|

||||||

|

### How it works

|

||||||

|

|

||||||

|

=== "Example of a high-level suggestion"

|

||||||

|

{width=512}

|

||||||

|

{width=512}

|

||||||

|

|

||||||

|